K8S Calico vs Cilium

Among Kubernetes CNI plugins, the two names compared most often are Calico and Cilium.

Both handle Pod-to-Pod communication, but their approaches could not be more different.

Calico, in its default data plane, relies on traditional Linux networking (iptables and BGP) to build a simple and predictable L3 network.

Cilium, on the other hand, uses eBPF to control traffic directly inside the kernel, extending visibility and policy enforcement all the way to L7.

Ultimately, this comparison is not about “which CNI is faster” but rather:

“How does the operator want to understand and manage the network?”

Calico offers familiarity and stability, while Cilium provides observability and advanced security.

1. The Two Projects Started From Different Philosophies

Calico began with a philosophy of “not modifying the fundamental nature of Linux networking.”

It implements Kubernetes networking on top of iptables and BGP — the native language of existing networks.

As a result, you get a simple, predictable L3-based CNI where operators can easily see how packets flow within the system.

Cilium was created from a completely different perspective.

It takes full advantage of eBPF (extended Berkeley Packet Filter), a mechanism for running sandboxed programs inside the Linux kernel.

Instead of sending packets through iptables chains, Cilium processes them in eBPF programs attached to kernel hooks.

In other words, Cilium controls the data plane at eBPF hook level, treating the kernel itself as an extended networking engine.

2. Internal Architecture — iptables vs eBPF

| Category | Calico | Cilium |
|---|---|---|
| Data Plane | iptables / nftables (default; an eBPF data plane is also available) | eBPF |
| Control Plane | Felix, Typha, BIRD (BGP) | Cilium Agent / Operator |
| Policy Engine | Kubernetes NetworkPolicy plus Calico policy resources (L3/L4) | Kubernetes NetworkPolicy plus CiliumNetworkPolicy (L3–L7) |
| Performance | Rule-matching cost grows with the number of rules | Hash-based eBPF map lookups, close to O(1) |
| Visibility | Basic flow logs | Hubble: L3–L7 visibility |

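Felix, Calico's node agent, programs iptables rules directly.

Calico uses ipsets to keep its chains short, but as the number of rules grows into the thousands, performance still tends to degrade because iptables evaluates the rules in a chain sequentially (O(n)).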

Cilium stores policies in BPF maps (hash tables), allowing near constant-time lookups (O(1)).

This difference becomes crucial in large-scale, multi-tenant environments.
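To make the O(n) versus O(1) contrast concrete, here is a minimal Python sketch. It is only a toy model under stated assumptions: the `Rule` type, the addresses, and the rule count are all hypothetical, and real iptables chains and BPF maps are considerably more involved. It simply counts how many comparisons each approach needs to reach a verdict.

```python
from typing import NamedTuple


class Rule(NamedTuple):
    """Hypothetical, simplified rule: allow one source IP to reach one port."""
    src: str
    dst_port: int
    action: str


def linear_match(rules, src, dst_port):
    """Walk the rules in order, the way a chain of rules is evaluated."""
    checks = 0
    for rule in rules:
        checks += 1
        if rule.src == src and rule.dst_port == dst_port:
            return rule.action, checks
    return "DROP", checks


def hash_match(table, src, dst_port):
    """One keyed lookup, the way a hash-type map is queried."""
    return table.get((src, dst_port), "DROP"), 1


# 5000 hypothetical rules; the packet we test matches the very last one.
rules = [Rule(f"10.0.{i // 256}.{i % 256}", 8080, "ACCEPT") for i in range(5000)]
table = {(r.src, r.dst_port): r.action for r in rules}

last = rules[-1]
linear_result = linear_match(rules, last.src, last.dst_port)
hash_result = hash_match(table, last.src, last.dst_port)

print("sequential:", linear_result)  # verdict and number of rules checked
print("hash map:  ", hash_result)
```

The sequential matcher has to touch every rule before it finds the last one, while the keyed lookup costs the same no matter how many entries exist.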

3. Fundamental Differences in Policy Model

Calico follows the standard Kubernetes NetworkPolicy — L3/L4 controls based on IPs and ports.
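As a rough illustration of what "L3/L4" means here, the sketch below builds a standard `networking.k8s.io/v1` NetworkPolicy as a Python dictionary and prints it as JSON, which `kubectl apply -f` also accepts. The policy name, namespace, labels, and port are hypothetical placeholders, not values from any real cluster.

```python
import json


def l4_ingress_policy(name, namespace, target_labels, allowed_labels, port):
    """Build a NetworkPolicy that lets pods with `allowed_labels` reach
    pods with `target_labels` on one TCP port. Nothing above L4 is expressed."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": target_labels},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [{"podSelector": {"matchLabels": allowed_labels}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


# Hypothetical example: pod-a may reach pod-b on TCP 8080.
policy = l4_ingress_policy(
    "allow-a-to-b", "default", {"app": "pod-b"}, {"app": "pod-a"}, 8080
)
print(json.dumps(policy, indent=2))
```

Note that the rule can only say "who" and "which port". It has no field for an HTTP method or path.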

Cilium goes further with native L7 policies.

For example:

“Pod A can call only the `/api/v1/metrics` endpoint of Pod B.”

This is possible because Cilium's eBPF programs redirect the selected traffic to a node-local L7 proxy (Envoy), which parses the HTTP requests and applies the rule.

This is not merely “more detailed security”; it means Cilium enables logical traffic control within microservices.
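The "Pod A may call only one endpoint of Pod B" rule could be expressed roughly as follows. This is a sketch, assuming the `cilium.io/v2` CiliumNetworkPolicy schema with an `http` rule under `toPorts`. The labels, port, and policy name are hypothetical, so check the manifest against the Cilium version you run before applying anything like it.

```python
import json


def l7_http_policy(name, target_labels, allowed_labels, port, method, path):
    """Build a CiliumNetworkPolicy that allows one HTTP method and path
    from pods with `allowed_labels` to pods with `target_labels`."""
    return {
        "apiVersion": "cilium.io/v2",
        "kind": "CiliumNetworkPolicy",
        "metadata": {"name": name},
        "spec": {
            "endpointSelector": {"matchLabels": target_labels},
            "ingress": [
                {
                    "fromEndpoints": [{"matchLabels": allowed_labels}],
                    "toPorts": [
                        {
                            # Ports are written as strings in this schema.
                            "ports": [{"port": str(port), "protocol": "TCP"}],
                            "rules": {"http": [{"method": method, "path": path}]},
                        }
                    ],
                }
            ],
        },
    }


# Hypothetical example: pod-a may only GET /api/v1/metrics on pod-b.
cnp = l7_http_policy(
    "metrics-only", {"app": "pod-b"}, {"app": "pod-a"}, 8080, "GET", "/api/v1/metrics"
)
print(json.dumps(cnp, indent=2))
```

Compared with a plain L3/L4 rule, the only new part is the `rules.http` list, and that is where the method and path restriction lives.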

4. Operational Differences You Actually Feel

Right after installation, Pod-to-Pod traffic in Cilium does not appear in iptables traces.

Instead, tools such as `bpftool map show` or `hubble observe` are used.

→ The entire troubleshooting toolchain is different.

Calico lacks a native Hubble-like system for L7 flow visualization.

Operators rely on syslog-based flow logging.

Calico has very low kernel version dependency.

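Cilium requires a relatively modern kernel because the eBPF hooks and features it depends on arrived over successive kernel releases. Recent Cilium releases document a minimum in the 5.x range, so check the system requirements for the version you plan to run.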

Debugging difficulty

- Calico → `iptables -L -n -v` provides a familiar view.
- Cilium → You use `cilium monitor`, `cilium status`, and `hubble observe`, which require some learning.
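As a small aid when switching between the two toolchains, the sketch below lists the commands mentioned in this post and reports which binaries are present on the current machine. It deliberately runs none of them, since some (such as `cilium monitor`) stream output until interrupted. The grouping is an assumption made for illustration, and binary names can differ between installations.

```python
import shutil

# Commands named in this post, grouped by CNI. Treat this as a checklist:
# the binary names on a given node or pod may differ from these.
DEBUG_COMMANDS = {
    "calico": [["iptables", "-L", "-n", "-v"]],
    "cilium": [
        ["cilium", "status"],
        ["cilium", "monitor"],
        ["hubble", "observe"],
        ["bpftool", "map", "show"],
    ],
}


def checklist(cni):
    """Pair each command line with whether its binary is found on PATH."""
    return [
        (" ".join(cmd), shutil.which(cmd[0]) is not None)
        for cmd in DEBUG_COMMANDS[cni]
    ]


for cni in DEBUG_COMMANDS:
    for command, found in checklist(cni):
        status = "found" if found else "not on PATH"
        print(f"{cni:7} {command:20} {status}")
```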

Summary:

Calico suits operators comfortable with Linux networking, while Cilium suits teams focused on cloud-native observability and zero-trust security.

5. Practical Selection Guide

| Environment | Recommended |
|---|---|
| Legacy / On-prem with older kernels | Calico |
| High observability needs (L3–L7, zero-trust) | Cilium |
| L7 security policies, endpoint-level control | Cilium |
| Predictable L3 routing with stable performance | Calico |
| Want L7 observability without Service Mesh | Cilium (with Hubble) |

Choose based on the problem you intend to solve, not on novelty.

The learning curve for Cilium can be steep — but once adopted, it becomes a platform-level security and visibility layer rather than just a CNI.

6. Conclusion — Cilium Beyond CNI, Calico Still Highly Relevant

Calico remains an excellent choice: simple design, strong BGP integration, predictable performance, and full operator control.

Cilium follows a different path.

It is not just a CNI but an OS-level traffic engine that observes, controls, and protects workloads through eBPF.

It shifts networking from “packet-based” to “intent-based.”

7. Flow Comparison

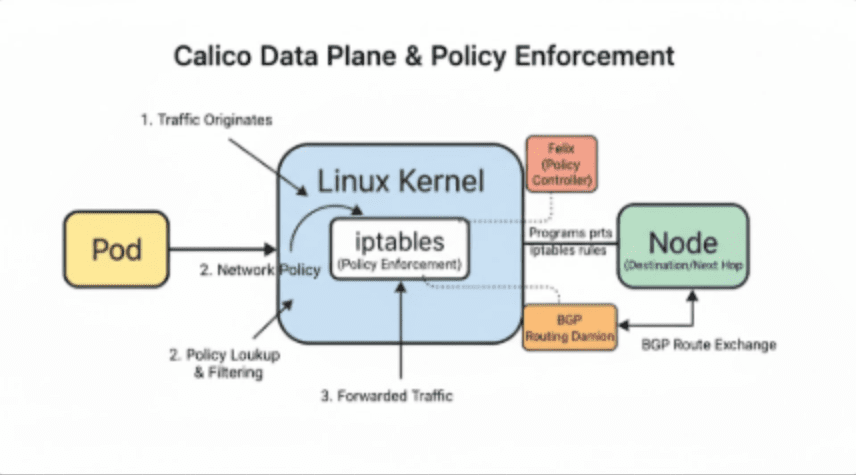

Calico Data Plane & Policy Enforcement (BGP Routing Mode)

Calico can operate with IP-in-IP, VXLAN, or BGP.

The example here assumes the BGP mode.

Calico uses iptables as its core enforcement engine. Let’s follow a packet:

- Traffic Originates: Packets generated in a Pod enter the Linux kernel via the veth interface.
- Policy Lookup & Filtering: iptables rules managed by the Felix agent determine which Pods can talk to each other and which ports are allowed.
- Forwarding: After passing policies, packets follow kernel routing tables. Calico exchanges these routes through BGP to determine the next-hop node.
- Node-to-Node Communication: The destination node receives the packet, applies its own iptables rules, and delivers it to the target Pod.

In short:

Pod → Linux Kernel (iptables) → BGP-based routing → Destination Node → Target Pod

As the number of iptables rules grows, the sequential matching leads to increased CPU overhead.

This simple yet transparent L3 model is Calico’s core identity.
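The Calico path described above (filter first, then route) can be sketched in a few lines of Python. This is a toy model, not Calico's implementation: the pod CIDR blocks, node addresses, and allow rules are hypothetical, and real enforcement happens on both the source and the destination node. The routing step uses longest-prefix matching, which is how the kernel picks among the routes learned over BGP.

```python
import ipaddress

# Hypothetical routes as a node might learn them over BGP:
# pod CIDR block -> next-hop node IP.
ROUTES = {
    ipaddress.ip_network("10.244.1.0/26"): "192.168.0.11",
    ipaddress.ip_network("10.244.2.0/26"): "192.168.0.12",
    ipaddress.ip_network("10.244.0.0/16"): "192.168.0.1",
}

# Hypothetical allow rules, checked in order: (source network, destination port).
ALLOW_RULES = [
    (ipaddress.ip_network("10.244.1.0/26"), 8080),
    (ipaddress.ip_network("10.244.2.0/26"), 443),
]


def policy_allows(src_ip, dst_port):
    """Sequentially scan the allow rules, as a rule chain would be walked."""
    src = ipaddress.ip_address(src_ip)
    return any(src in net and port == dst_port for net, port in ALLOW_RULES)


def next_hop(dst_ip):
    """Pick the most specific matching route (longest prefix wins)."""
    dst = ipaddress.ip_address(dst_ip)
    matches = [net for net in ROUTES if dst in net]
    if not matches:
        return None
    return ROUTES[max(matches, key=lambda net: net.prefixlen)]


def forward(src_ip, dst_ip, dst_port):
    """Apply policy, then routing, in the order the post describes."""
    if not policy_allows(src_ip, dst_port):
        return "dropped by policy"
    hop = next_hop(dst_ip)
    return f"forward via {hop}" if hop else "no route"


print(forward("10.244.1.5", "10.244.2.9", 8080))
print(forward("10.244.1.5", "10.244.2.9", 22))
```

Because every step maps onto a familiar concept (a rule list and a routing table), an operator can reason about the result with ordinary Linux networking knowledge.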

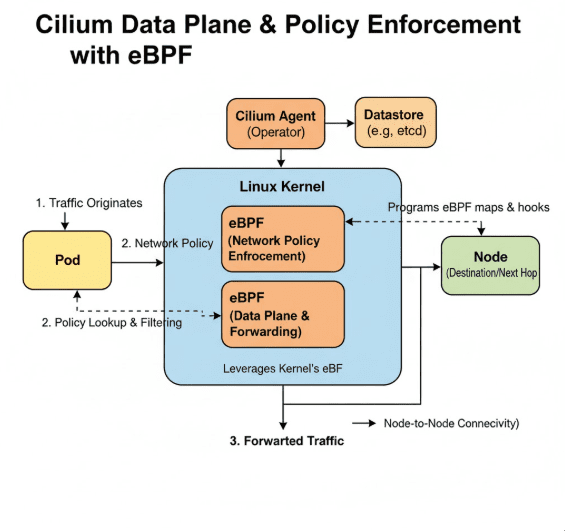

Cilium Data Plane & Policy Enforcement (eBPF)

Cilium does not rely on iptables for policy enforcement.

Instead, it applies policies and forwarding logic through eBPF programs inside the kernel.

Follow the packet:

- Traffic Originates: Pod traffic enters the kernel via the veth interface.
- Policy Lookup & Filtering: An eBPF program checks policies stored in BPF maps (hashmaps), loaded by the Cilium Agent. No sequential rule scanning is needed.
- Forwarding: The eBPF data plane processes and forwards packets directly. Node-to-node connectivity can be VXLAN, Geneve, or direct routing.
- Node-to-Node Connectivity: Each node routes traffic using eBPF logic, kept consistent by the Cilium Agent and Operator, without requiring BGP.

In short:

Pod → eBPF policy → eBPF data plane → Node → Target Pod

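L3/L4 processing happens in eBPF programs inside the kernel, bypassing iptables chain traversal. Only traffic subject to L7 rules is handed to a proxy.

Policy lookups are hash-based and close to O(1), policy changes are applied by updating map entries rather than rewriting rule chains, and traffic visibility extends up to L7 through Hubble.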
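The policy lookup step can be pictured with a small sketch. Cilium assigns a numeric security identity to each distinct set of labels and keys its policy maps on that identity rather than on Pod IPs. The Python below imitates that idea with plain dictionaries. The identity numbers, labels, and map layout are hypothetical simplifications, not the actual BPF map format.

```python
# Hypothetical numeric identities, one per distinct label set.
IDENTITIES = {
    frozenset({"app=frontend"}): 1001,
    frozenset({"app=backend"}): 1002,
}

# Hypothetical policy map for the backend endpoint:
# (source identity, destination port, protocol) -> verdict.
BACKEND_POLICY_MAP = {
    (1001, 8080, "TCP"): "allow",
}


def verdict(src_labels, dst_port, proto):
    """Resolve labels to an identity, then do a single keyed lookup.
    Anything without an explicit entry is denied."""
    identity = IDENTITIES.get(frozenset(src_labels))
    return BACKEND_POLICY_MAP.get((identity, dst_port, proto), "deny")


print(verdict({"app=frontend"}, 8080, "TCP"))  # entry exists
print(verdict({"app=backend"}, 8080, "TCP"))   # no entry for this identity
print(verdict({"app=unknown"}, 8080, "TCP"))   # labels with no identity
```

Adding or removing an allowed peer means inserting or deleting one map entry, which is why updates do not require rewriting a rule chain.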

🛠 Last updated: 2025.11.13

ⓒ 2025 엉뚱한 녀석의 블로그 [quirky guy's Blog]. All rights reserved. Unauthorized copying or redistribution of the text and images is prohibited. When sharing, please include the original source link.


